Computational reflections on Art, or What Art could mean to a Computer

- adam basanta

- Apr 20, 2024

- 37 min read

Updated: Feb 6, 2025

This entry is part of the Deep Objekt and Intelligence-Love-Revolution research programs, developed and led by Sepideh Majidi, with contributions from scholars such as David Roden, Francesca Ferrando, Sami Khatib, Keith Tilford, Maure Coise, Amanda Beech, Isabel Millar, Mattin, Thomas Moynihan and more. Partner Platforms: Foreign Objekt, Posthuman Art Network, Deep Objekt, The Space Gallery, UFO.

Deep Objekt [0]: miro

A colloquial explanation of ideation or spontaneous thought circa 550 BC, via the myth of the birth of Athena, erupting fully formed from the head of Zeus and exclaimed by Hephaistos. Collection of the British Museum.

All original diagrams, as well as an algorithmic essay, are available in the following link. Additional information about my research as part of the Deep Objekt [0] residency can be found here. A public draft is available here - I welcome comments, suggestions, and annotation.

The following is an in-progress thought experiment. I am still thinking through these ideas and am happy to hear feedback or alternate views.

The scientific and poetic attitudes coincide: both are attitudes of research and planning, of discovery and invention. (Italo Calvino, 1962).

1. The Computational challenge

In his lecture “From Computation to Consciousness”, cognitive scientist and AI researcher Joscha Bach proposes a definition of consciousness as a virtual experience created by a set of core overlapping computational [1] functions (2015). These information processing functions fuse together discontinuous sensory experience into a seemingly-continuous “story” anchored by a representation of a protagonist, i.e. the self. Within Bach’s “physicalist computational monism”, in which the computational agent (the mind) is embedded in a physical computing universe, ‘facts’ are not perceptions of ‘things in the world’, but rather adaptive resonances between structured sensory information and inter-related computational functions. Bach suggests recalibrating notions of ‘meaning’ and ‘truth’ as virtual “suitable encodings” (with measurable properties such as sparseness, stability, adaptability, cost of acquisition, etc.) within a computational, and increasingly testable, context.

Fig 1 (Above): Different sets of overlapping computational functionalities underpinning various states of consciousness. Fig 2a + 2b (Below): diagrammatic representation of physicalist computationalist monism, as parallel to a “redstone computer” operating inside a Minecraft game. Source: Bach 2015.

I find a resonance between Bach’s approach and Negarestani’s notion of inhumanism as a “vector of revision” for the inherent biases limiting humanist thinking (Negarestani 2014a). As Negarestani states: “Inhumanism is exactly the activation of the revisionary program of reason against the self-portrait of humanity” (2), “to submit the presumed stability of this meaning to the perturbing and transformative power of a landscape undergoing comprehensive changes under the revisionary thrust of its ramifying destinations.” (Negarestani 2014b, 1). There seemingly would be few focal points more painful to contemplate and revise - that is, more rife with humanist preconceptions - than the very nexus of the human agent: the notion of a conscious self.

If, following Bach, we re-cast the self as an evolutionarily useful high-level representation of numerous concurrent computable functions - a “story” one tells itself about itself - perhaps Art [2] can be considered as a useful high-level representation for the many distributed functions that constitute a liberal humanist society: Art as the story liberal humanist society tells itself about itself. Through this story, Art allows us to remain dualists, or maintain a link to metaphysical notions of “human spirit” or “the divine”, even within a physicalist and scientific understanding of the world. Humanist notions of Art are a tautological self-portrait: we make art because it is essentially human, but we are human due to our ability to make art. To attempt to dismantle, or even reflect on this mythical notion of Art from a computational perspective is unpopular for a reason: it is a dismantling of a cornerstone of the de-facto underlying philosophy of our world.

Fig. 3. The hierarchical self-image (adapted from Carver, 1996 by Pelowski and Akiba, coloured annotation added by the author). Following the annotation, note that the diagram is equally effective (and flattering) at attributing causality from top down (an ideal self must be creative and an art person, thus understand art, thus find meaning) and bottom up (somebody who finds meaning in art is said to understand it, which makes them an artistic and creative person, hence an ideal self). Source: Pelowski and Akiba, 2011.

2. From Colloquial to Calculable, From Calculable to Computable

The colloquial notion of Art in the legacy of Western liberal humanism is antagonistic to notions of computability: Art is subjective, up to personal interpretation, requires internal or external “inspiration” (the difference between which is suspiciously negligible). Art is a channel of communication (although to what degree is unclear) but does not abide by rules and methodologies applied to other channels of communication (by comparison, natural language seems a stable and near-lossless information exchange protocol). It is a minor miracle, given the emphasis on individual interpretation and quasi-divine characterization of experiencing or making Art, that an observer of Art experiences any kind of consistency with regard to the art they see, let alone that consensuses would develop intersubjectively between individuals disconnected in time and space about particular works, artists, movements or styles, underlying motivation and meta-creative reasoning.

The colloquial sense described above is both affirming self-narrative and outward facing public relations; it is not, at this point of historical trajectory, a result of serious inquiry on What Art Is, but rather a back and forth between bottom-up explanation of individual events according to humanist preconceptions of Art and a top-down attribution of causality from humanist preconception of Art to its constituting micro events. The persistence of this narrative is jarring in light of recent advancements in computational image-making using machine learning techniques: individual style, for instance - that amorphous quality that is simply ‘grasped’ yet also determines individual and group associations and expectations - can be calculated and even transferred [3] in a highly effective manner.

However, the deeper one digs into colloquial understanding of art, the more internal tensions are found: despite the apparent inexplicability of Art, the colloquial account does seem to suggest that some aspects of art are calculable, and that some of these aspects are commonly used to interpret art in many practical settings. Occasionally, a computational approach even seems essential to the project of Art as-defined-in-the-humanist vein.

For instance, with all other things being equal, it is generally understood that certain material aspects of a work (i.e. size, value of material, nature and scope of technical labour) will affect an artwork’s value (monetary, cultural, symbolic, etc): generally speaking (and normalizing for other variables), a bigger painting is worth more than a small painting, a sculpture cast in gold has more value than a sculpture cast in plaster. It is also generally understood that certain immaterial aspects (i.e. artist identity, time period in which the work was created, concept of the work) affect an artwork’s monetary or perceived value. Due both to the contribution of conceptual art and a global art economy, immaterial aspects of a work can have a larger effect on value than the work’s physical materiality: A painting physically identical to Mark Rothko’s “Orange and Yellow” that is not authored by Mark Rothko will not have the same value (monetary or cultural) as the original.

Left: Mark Rothko “Orange and Yellow”, 1956. Right: “89%_match: Mark Rothko ‘Orange and Yellow’, 1956”, 2019, autonomously created random image automatically matched to its closest famous artwork and published as part of the installation “All We’d Ever Need Is One Another” (2019) by Adam Basanta.

Similarly, the colloquial understanding of art education, across technical, conceptual, and art appreciation pedagogical paradigms, relies on a computational notion of art even if it does not try to account for every computation entailed. Without the notion of information processing schema which can be partially reconfigured through self-reflection or instruction, it is unclear how a student can improve in response to feedback, or how new specialist schemas can be taught or transferred, or even what “being a good teacher” (transferring schemas and information effectively with measurable results) would mean. For instance, we are not born with knowledge about what technical aspects of painting “mean” in relation to a pointillistic painting. Through instruction, new attentional, perceptual, and relational information-schemas are created, exercised, calibrated, stored, and modified: we understand the aesthetic dimensions relevant to pointillism (“they paint with dots…”) and associate them with their conceptual correlates (“... as a response to…”). Later, we determine our own biases (opinion, taste) in relation to the learned information processing schema and learned consensus opinions. Without an assumed underlying (at minimum, partial) computational paradigm, transfer of skills and mental schemas [4] can only be explained metaphysically, although they rarely are.

Finally, colloquial reactions to art such as to “like”, “love”, “hate”, “disgust”, for Art to be “boring”, “good” or “bad”, etc. are all results (or high level representations) of comparison: an observer compares their experience to either a mental ideal (what a work should be), or other works they have previously experienced. In the case of artists, a comparison can be made not only to one’s own work, but to the entire decision-making process that led to the work’s creation (“I don’t like how this artist approaches the topic”, “what would I have done differently”).

While there are clearly variations in the results of these comparisons, they are not random. The relative consistency of opinion regarding specific artworks (“the canon”, “my favorite artwork”), and the consistency of one’s own opinions and reasoning across artworks (our preferences seem to form clusters), suggests that responses to Art rely on similar mental schemas, within which complex overlapping criteria are applied through systematic processes. Given the fairly consistent (and repeatable - we rarely hate a work we loved last month without being exposed to new information) nature of our experience with art, I will speculate that there must be an underlying, flexible set of criteria for comparative evaluation which integrates:

(1) evolutionarily-developed perceptual functions, alongside

(2) culturally-developed specialized cognitive functions using learned analytic vectors,

and is modulated by

(3) the collection of personal biases of the observer (aka personal taste), which

(4) corresponds roughly to a consensus-based collective criteria referred to as the Artworld(s).
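As a loose illustration of how the four components above might combine, the following Python sketch scores a work by comparing it to a mental ideal along weighted axes. Everything in it is a hypothetical assumption made for illustration: the function name `evaluate`, the axis names, the numeric values, and the `conformity` parameter that blends personal and collective weights. It is not a transcription of any diagram in this essay.

```python
from typing import Dict

Features = Dict[str, float]  # hypothetical analytic axes, values in [0, 1]

def evaluate(perceived: Features, ideal: Features,
             personal: Features, collective: Features,
             conformity: float = 0.5) -> float:
    """Return a similarity score in [0, 1] between a perceived work and an ideal."""
    total, norm = 0.0, 0.0
    for axis, target in ideal.items():
        # (3) personal bias and (4) collective criteria, blended per axis
        weight = ((1 - conformity) * personal.get(axis, 0.0)
                  + conformity * collective.get(axis, 0.0))
        # (1)/(2) the comparison itself, on a perceptual or learned axis
        difference = abs(perceived.get(axis, 0.0) - target)
        total += weight * (1.0 - difference)
        norm += weight
    return total / norm if norm else 0.0

# Hypothetical example: a technically strong but unsurprising work.
perceived = {"technique": 0.9, "novelty": 0.2}
ideal = {"technique": 1.0, "novelty": 1.0}
personal = {"technique": 0.2, "novelty": 0.8}    # this observer prizes novelty
collective = {"technique": 0.8, "novelty": 0.2}  # this Artworld prizes technique

score_personal = evaluate(perceived, ideal, personal, collective, conformity=0.0)
score_collective = evaluate(perceived, ideal, personal, collective, conformity=1.0)
```

In this toy case the same work scores lower for the observer than for the collective, which is one simple way individual variation could arise within a shared schema.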

Figure 4. Relationship between Colloquial, Calculable, and Computable along the axes of Analysis and Acculturation.

Have we simply forgotten the computations we make when we perceive or make art over time [5]? Acculturation fixes ongoing operating computable structures into calculable results (“this works better than that”), and with further acculturation, the calculable aggregates ossify to colloquial understanding (“this is how it always worked”).

I propose that this process can be reversed through analysis, specifically through iterations between (a) phenomenological reflection, (b) explicit diagrammatization, and (c) mental testing, so long as the processes reflect an inhumanist perspective: that is, the analytical posture does not presuppose that art must be a result of human activity, nor its culmination, that one’s experience making and observing art are necessarily metaphysical rather than computational, or that computation is limited to the practical possibilities of modern computers.

The fear of Art being computable runs deep, and the emotional stress of such ideas may be in part in reaction to a culture in which non-enumerable and non-computable experiences are at a premium. While sharing this reticence, I do not see it as a reason to abandon the thought experiment: if Art is not computational, it will reveal itself as such. But if there is a decidedly non-computational core to our experiences with Art, it may reveal itself more accurately through the stripping away of computable layers and components, rather than assuming it in a top-down manner.

Conversely, if art is at least partially computational, the manner in which it operates should be further investigated. I suggest that a partially computational account of art can provide additional useful heuristics with which Art can be contended, as well as alleviate some of the deep-rooted practical problems and brutal power dynamics that characterize the Artworld as a whole (more on this in section 6).

In some ways, I will be asking: if one was an Art computation machine, or if the Artworld itself was a machine that computes, how would these computations be structured? And, do these structural transcriptions bear semblance to lived observations made from an inhumanist [6] perspective? And finally, regardless of whether my claims of computation can be verified, do they provide a useful additional perspective?

3. Phenomenological reflections and Diagrammatic testing

We accept, for now, that at least some elements in Art are calculable and computable, and that due to acculturation there is a learned bias to leave such calculations undiscovered [7]. In order to uncover computational processes, I propose a recursive methodology oscillating between phenomenological reflection, diagrammatization, and mental testing.

Conscientious of potential pitfalls inherent to top down model construction (Negarestani 2023, 24-25), I anchor my approach in phenomenological reflection, followed by explicit diagrammatic transcriptions of perceived or plausible computational processes, using the functional language [8] inherent to flowcharts. The exercise of making-explicit through diagrams, and articulating computational structures “out of time”, allows a further recursion through reflection and mental testing: as I “play through” my experiences with Art (whether in real-time, or as a function of memory of previous experience), I reflect on whether or not the diagram appropriately represents a range of possible experiences (does the process accommodate multiple artworks and evaluations across different media and approaches).

In an effort to maximize recursive potential, the methodology itself can be articulated through diagrammatic language. Figures 5a to 5e illustrate an iterative expansion from a single moment of experience, through the insertion of phenomenological reflection in the experiential loop, and the subsequent addition of diagrammatization alongside its afforded feedback loops and relationship to temporality. Figure 5f elaborates on the evaluation of analysis, detailing the iterative processual steps which may allow continuous re-evaluation of the veracity (and efficacy) of computational procedures.

Figures 5a to 5e: expanding diagrammatic representation of methodology.

Figure 5f: diagrammatic representation of iterative approach to evaluation of reported computational approaches.

The emphasis on explicit externalization of potential computable functions through which we engage with Art is a direct extension of the inhumanist commitment. In making speculative propositions of computable structures explicit and laying them bare, we can accept, modify, or reject the proposed schemas: however, as this evaluation takes place while accounting for one’s self-interest (monetary, symbolic, reputational, etc.), these interests are themselves integrated in the computational model. The explicit externalization forces us to account for the many biases which we may not be aware of otherwise.

The diagrams produced portray conceptual computations, and thus are not concerned with specific value ranges, precise mathematical operations [9] or calculated results. Rather, emphasis is placed on the structures through which calculability can occur. In this sense, the diagrams do not immediately transfer to operable computable structures for rigorous testing. However, it is possible that some conceptual computation structures can be applied operationally in future work (more on this in section 5B).

The diagrammatic representations that follow in sections 4 and 5 are developed through an iteration of top-down and bottom-up modelling approaches. For example, a local phenomenon (i.e. evaluation of technical skill by an observer) can at first be described “from the bottom up” (or perhaps, from inside to outside); however, the local phenomenon (forming an opinion on an artist’s technical skill in a specific artwork) involves the appeal to learned global criteria (what qualifies as technique, technical hierarchies and standards). These global criteria may be biased subjectively (one criterion can be more or less important than another), which would explain individual variation of opinion within a shared schema. But some global criteria are influenced by the aggregation of local evaluations: for instance, the importance of technical realism in painting has decreased in part because enough individuals [10] enacted alternate schemas, which eventually came to be a new consensus position.

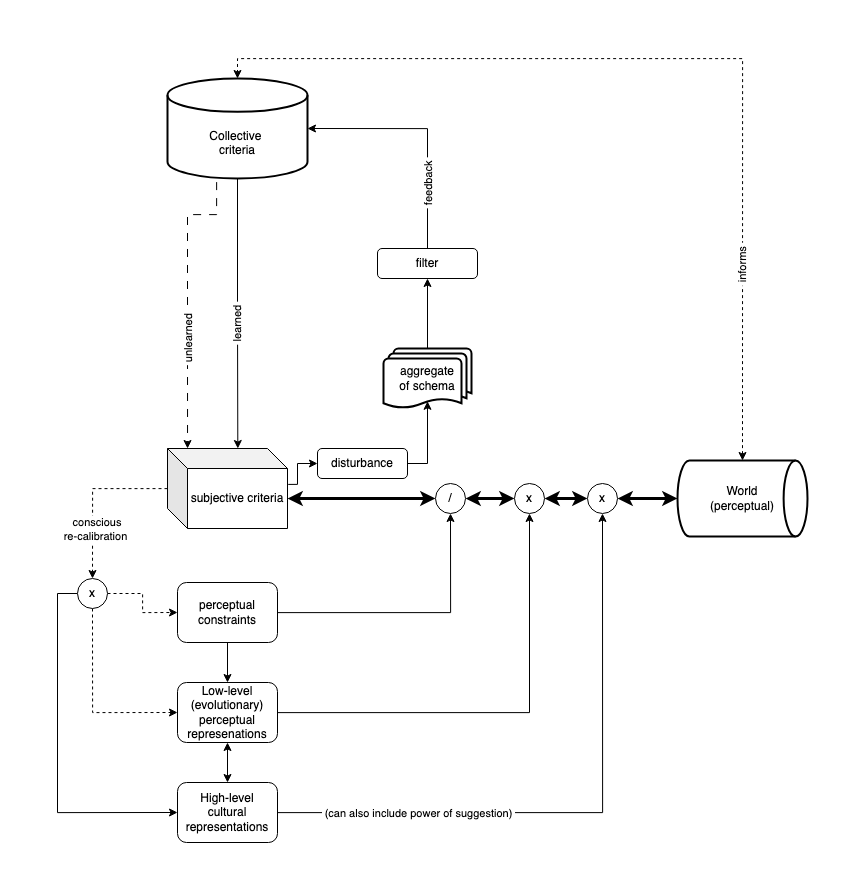

The challenge presented to computable notions of art is clear. There is a bi-directional influence between individual criteria (or schema) of interpretation (through which value is computed) and the collective [11] (cultural or subcultural) criteria of interpretation: colloquially, we learn collective criteria through official education and unofficial reinforcement bias (reacting positively or negatively to such criteria as we enact them in the world), but collective criteria themselves ossify as an aggregate of many generations of individual criteria. Beyond questions of causal order of the chicken relative to the egg, if both subjective and collective processes are computational, we must model the interaction according to the principle of true concurrency, where information and schema can flow between (and co-construct) asynchronous computational functions. A proposed computational modelling of this interaction can be seen in Figure 6, which makes this interaction explicit. The increase in line thickness in the bi-directional interactions between Observer and the Physical World (modulated by perceptual constraints, low-level and high-level abstractions) indicates perceptual flow, as opposed to the thinner lines, which indicate information flow (at a lower clock rate). All subsequent models will incorporate this subjective-collective exchange in some way.

Figure 6: representation of interaction between subjective (individual) and collective evaluation criteria. Thick lines represent perceptual flow, thin lines indicate information flow, dotted and broken lines represent potential or unconscious influence.
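The bi-directional exchange between individual and collective criteria can be caricatured in a few lines of Python. This is a minimal sketch under strong assumptions, all of them mine rather than Figure 6’s: each observer holds a single weight (say, the importance of technical realism), the collective holds one too, and the hypothetical rates `learn` and `aggregate` control how quickly each drifts toward the other. It also runs in synchronous rounds, so it is a simplification of, not an implementation of, the true concurrency discussed above.

```python
from typing import List, Tuple

def step(individual: List[float], collective: float,
         learn: float = 0.1, aggregate: float = 0.05) -> Tuple[List[float], float]:
    """One round of exchange between individual weights and the collective weight."""
    # Individuals drift toward the collective criterion (education, reinforcement).
    updated = [w + learn * (collective - w) for w in individual]
    # The collective criterion drifts toward the aggregate of individual criteria.
    mean = sum(updated) / len(updated)
    return updated, collective + aggregate * (mean - collective)

def simulate(individual: List[float], collective: float,
             rounds: int) -> Tuple[List[float], float]:
    """Repeat the exchange for a fixed number of rounds."""
    for _ in range(rounds):
        individual, collective = step(individual, collective)
    return individual, collective

# Hypothetical starting point: consensus values realism highly (0.9),
# while two of three observers enact an alternate schema.
final_individual, final_collective = simulate([0.1, 0.2, 0.9], 0.9, rounds=500)
```

With these rates the individual and collective weights settle on a shared value below the initial consensus: the dissenting observers have shifted the collective criterion even as they were shaped by it.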

4. Computational Agencies and the Virtuality of Artworks

Art is an exaptation of our perceptual-cognitive system: it is not the purpose for which these systems developed, and yet we experience Art, along with the rest of the world, through them. Whatever specialized structures are specific to contemporary art, they are bootstrapped onto evolutionarily ancient structures [12]. Imagining a purely theoretical, simple computational agency that can sense an Artwork (we do not even need to consider vision here), what basic structures and pre-existing “code dependencies” would be required?

Figures 7a - 7d below represent the expanding computational sensory-cognitive [13] structures and pre-existing “library/code dependencies” required to entertain higher-level abstractions. In 7a, an agent can only sense its world and react with minimal cognitive structuring of perception (based on previous input) and adjusting its attentional focus. Its limited ability to react implies minimal sentience, but it is unable to segment the world: it remains experienced as a continuous undifferentiated sensory stream. It is perhaps similar to placing a newborn in front of an artwork, prior to the entraining of perceptual neural networks to effectively detect edges. To the newborn, not only does the artwork not exist, it cannot exist. In Fig 7b, with the addition of a world-model, the agent can begin to distinguish consistencies or “things” in the world, and if the observed structure of such “things” changes with time, these changes can be anticipated, forming expectations.

Figure 7a-7b: representation of expanding computational structures and pre-existing “library dependencies” necessary to perceive an object or “thing” in the world

To “see a thing as Art”, as illustrated in figure 7c, requires a model of a subset of the world that is or can be Art, and as this is an exapted evolutionary feature, it requires consciously learned cognitive structures. However, figure 7c neglects some of the pre-existing cognitive “libraries” or underlying code structures necessary for the notion that some subset of “things” in the world are in fact Art. These “dependencies” are added in figure 7d. For one, an observed “thing”-that-is-Art necessitates an “other” to have made and declared it so, as well as a group to confer consensus onto it: for this we require a self model, and subsequently develop externalized versions to model an “other self”, structured similarly to one’s understanding of their self model [14]. As Art accumulates across generations, one requires a model of historical time, alongside increased complexity of comparison between expectation and observation. These new structures reinforce each other through information exchange and reinforcement patterns, indicated with dotted lines.

Figure 7c-7d: representation of expanding computational structures and pre-existing “library dependencies” necessary to perceive an object as an artwork
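The “library dependency” metaphor can be taken somewhat literally. The sketch below encodes one possible reading of figures 7a to 7d as a dependency graph and uses Python’s standard `graphlib` module to derive an order in which the capabilities could be “installed”. The capability names and the exact edges are my own assumptions for illustration; the figures themselves may group or connect these structures differently.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Hypothetical capability graph: each key depends on the capabilities in its set.
DEPENDS_ON: Dict[str, Set[str]] = {
    "sensing": set(),
    "attention": {"sensing"},
    "world_model": {"sensing", "attention"},
    "expectation": {"world_model"},
    "self_model": {"world_model"},
    "other_model": {"self_model"},
    "group_consensus": {"other_model"},
    "historical_time": {"world_model"},
    "art_model": {"world_model", "expectation", "other_model",
                  "group_consensus", "historical_time"},
}

def build_order(deps: Dict[str, Set[str]]) -> List[str]:
    """Return the capabilities in an order where dependencies come first."""
    return list(TopologicalSorter(deps).static_order())

def supports(capability: str, available: Set[str],
             deps: Dict[str, Set[str]] = DEPENDS_ON) -> bool:
    """True if the capability and all of its transitive dependencies are available."""
    if capability not in available:
        return False
    return all(supports(d, available, deps) for d in deps[capability])

order = build_order(DEPENDS_ON)
minimal_agent = {"sensing", "attention"}  # roughly the agent of figure 7a
full_agent = set(DEPENDS_ON)              # roughly the agent of figure 7d
```

Here `supports("art_model", minimal_agent)` is false while `supports("art_model", full_agent)` is true, mirroring the claim that for the minimal agent the artwork cannot exist.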

In a world ungraspable by these basic computational architectures, Art is of course not just made by an “other person”; it is made by an artist, who may have intent or reason for their creation (and this is considered in turn by the observer as part of their experience of Art). Colloquially, artists intend certain things, and try to encode these intentions technically in a manner available to the observer, who with additional psychological priming, can decode the physical traces of intention and reconstruct their meaning mentally. If we assume no metaphysics are in play, the primary channel of communication is a locale of raw information (from which we form higher level representations) encoded in a physical medium. Somehow this computational articulation seems to me more magical, more unexplainable, than the metaphysical explanation.

Figure 8 attempts to model the information flow dynamics between artist-artwork-observer, through the metaphor of prismatic refraction. In the top half of the diagram, a simplified representation of the artist iterates intended ideas through a prism - the particularities of which are formed partially by education, and may include techniques but also world view, or even metaphysical notions by the artist about their methods - which refracts the intended ideas and encodes them in a physical art object, until the observed result meets an idealized mental projection. Prismatic refraction is used as metaphor as of course it is quite unclear what exactly a “successful” (as in, readable) encoding would entail. The shape or colour of the refraction could be likened to a personalized artistic sensibility, while its consistency could be likened to a degree of artistic skill (the ability to create what you intend even with flawed heuristics).

In the bottom half, the unprimed observer encounters the (to them, unknown) Art work, forming initial impressions to construct their own prismatic lens: an imagined (and distorted) replica of the artist’s prism, through which to refract their attention and decode the relevant information. A common first impression would be “media type”: a painting might elicit a different interpretive prism than video art.

Fig 8: Encoding and decoding through the metaphor of prismatic refraction.

Applying attentional focus through the interpretive prism, the observer tries to decode information for which they do not have the exact “code”, which necessitates exploration: trying different things to observe in different ways. The mental model of the Artwork (the correlated structure of perceptual data) is probed continually, but due to the sheer amount of information encoding-structures (ways to make art) possible, the observer can only focus on a certain amount of features: only a certain amount can be “known” (as in perceived and verified) about an artwork at one moment.

To reconstruct an observer’s understanding of the Artwork from these known elements, I extend the metaphor of saccadic (rapid) eye movements, which constrain us to accurately perceive only a small (rapidly moving) portion of the visual field while areas outside of the focal area are “synthesized” by the brain’s visual and anticipation systems. Figure 8 speculates that the little we “know” about a piece at any given moment, and the rapid oscillation between these morsels of knowledge, are reconstructed in a (virtual) mental projection of the Artwork (note the distorted appearance of the reconstruction). It is only the mental projection of the Artwork that is accessible to further interrogation by the observer; the mental model itself is an inaccessible correlate of the sensory stream (visual patterns), while the mental projection is a reconstructed (and thus, also deconstructible) higher-order representation of the sensory stream (a painting of a dog). It follows that any act of evaluative comparison which would lead to an “opinion” on an Artwork occurs solely in a virtual [15] rather than perceptual domain.

If we accept that an artwork requires encoding of information, we can further speculate on some principles of information relative to the size of encoding space. There are potentially infinite ways of encoding a potentially infinite range of information, and a potentially infinite number of axes of meaning along which information can be interpreted. Figure 9 extends the principle of spatial dimensionality to speculate on capacity and type of information which may be encoded along physical dimensions (black axes) and in-turn, higher level constructs (green axes) [16]. The diagram can be considered from the point of view of an artist (encoder) and observer (decoder).

Given the scale of possibilities, I will begin by reducing dimensionality entirely. If we imagine a potential Artwork which exists solely in a zero-dimensional space, what information could it contain? With no axis on which information can be encoded, art can only exist or not exist as discrete values: an Artwork in a zero-dimensional space is its own self-declaration (or reification) that it is, in fact, Art. In other words, the zero-dimensional space in Art is crucial (even outside the legacies of conceptual art, readymades, and text-based procedural-instruction art), as without it all further information can be (and sometimes is) interpreted as non-art.

The expansion of dimensional space and its ability to contain information, as well as the emergence of further axes of high-level concepts, is illustrated through the traditional division of visual arts to media defined by dimensionality (2d, 3d, 4d). However, the analysis presented should be taken as a metaphoric implementation of spatiality in relation to information, rather than a recipe or hierarchy of art forms according to their physical dimensional features.

Fig 9: dimensionality and its implications for (artistically-relevant) information encoding.

With every physical dimension (black axes) added, there are several virtual axes (green axes, as a mirror-image of their corresponding physical dimension axis) created. For example, an Artwork in one-dimensional space can contain points, which may have a position, and with iteration said points can form a line (continuity of points) along an infinite axis [17]. The physical parameters of location and position, for instance, create higher-level concepts (the “mirroring” green axes) like “single” or “multiple”, or “type” (in this case, proto-colour or shade): additional axes on which difference in information can be encoded. Two separate dots on an axis may not sound particularly interesting from an artistic point of view, but the registration of (1) perceptual units which can be (2) counted and (3) compared in higher-level constructs (location, range, intensity value) represents new information content in addition to the zero-dimensional claim of the phenomenon belonging to the category of Art. With the addition of a 2nd physical dimension we arrive at more recognizable results: making marks in a 2-dimensional space allows physical parameters such as shape, fill, and texture to form higher-level information axes of gesture, “thingness” and representational content, metaphor, and even proto-narrative. The 3rd physical dimension adds physical parameters such as volume, tactility, and 3-dimensional position, all of which form higher-level axes which imbue metaphors with increased ecological resonance: a thing is not only represented as hidden, it is hidden and must be discovered through change of position. Similarly, narrative can now be experienced in a lived rather than abstracted manner, through the observer’s (or artwork’s) physical movement. Adding a 4th temporal dimension fuses narrative to the lived experience of the observer: the possibilities of narrative expand as the 3D information-space is now compared to past moments and projected on to future moments.

Again, the emphasis here should not be on the correlation of analytical axes to the physical dimensions, nor on the specific (and clearly incomplete) account of perceptual information and correlating higher-level concepts. Rather, I propose more generally that (1) an Artwork contains encoding along axes of analysis, (2) that axes corresponding to low-level perceptual information spawn multiple reflective axes of higher-level concepts, and that (3) an expansion of dimensional space allows for larger amounts of information (or subsets thereof) to be encoded. The amount of information that can be encoded in a given space should not be seen as hierarchical (more is not better, and regardless, there would be hard perceptual and cognitive limits imposed) but rather as a larger space in which difference can be articulated: there are simply more possibilities that can be contained within the space. Returning to our zero-dimensional artwork, we see that it is the only Artwork that can be created in the given information space: it IS an Artwork (or it isn't, in which case, it no longer concerns us), and as BEING an artwork is all it can be, it is not possible to make an Artwork in the same space that will not be identical to the first (there are no axes on which to encode difference). The expansion of possible Artworks (the amount of informationally unique “things”) moving from zero to one-dimensional space, and following to two and three-dimensional spaces is exponential due to the creation of virtual (non-physical) axes of higher-level representations.
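The counting argument above can be made concrete with a small Python sketch. It rests on simplifying assumptions that are mine, not the essay’s: every axis is taken to hold a fixed number of distinguishable values (`LEVELS`), and every physical dimension is taken to spawn a fixed number of virtual axes (`VIRTUAL`). Under those assumptions the number of informationally unique encodings is the number of levels raised to the number of axes.

```python
from itertools import product
from typing import List, Tuple

def encodings(axes: int, levels: int) -> List[Tuple[int, ...]]:
    """Enumerate every distinguishable encoding over `axes` axes with `levels` values each."""
    return list(product(range(levels), repeat=axes))

def total_axes(physical: int, virtual_per_physical: int) -> int:
    """Physical axes plus the virtual axes each one is assumed to spawn."""
    return physical * (1 + virtual_per_physical)

LEVELS = 4   # hypothetical number of distinguishable values per axis
VIRTUAL = 2  # hypothetical number of higher-level axes per physical axis

# Number of unique encodings for 0, 1, and 2 physical dimensions.
counts = {d: len(encodings(total_axes(d, VIRTUAL), LEVELS)) for d in range(3)}
```

With zero axes there is exactly one encoding, the empty one: the zero-dimensional Artwork that can only declare itself Art. Each added physical dimension then multiplies the count, and multiplies it faster because of the virtual axes it brings with it.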

Given the potentially infinite axes along which multiple encodings can (and often do) overlap in Artworks, we can imagine Artwork[A] as an encoding of information along N (perceptual or conceptual) axes of interpretation: that is, as existing in an N-dimensional space (a space with N number of dimensions, corresponding to the axes on which information is inscribed in Artwork[A]). Artwork[A] itself can be conceived as an n-dimensional shape [18] in its own n-dimensional space, or as an n-dimensional shape contained within a space of all possible n-dimensions of all possible Artworks (a space of all enacted n-dimensional spaces, discarding all non-unique axes).
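As a minimal sketch of this idea, each Artwork can be written as a mapping from axis names to values, and the space of all enacted spaces as the union of the axes in use, with duplicates discarded. The axis names, the values, and the choice to place a work at 0.0 on axes it does not use are all assumptions made for illustration.

```python
def shared_space(artworks):
    """Return the sorted list of unique axes used by at least one artwork."""
    return sorted({axis for work in artworks for axis in work})

def embed(work, axes, default=0.0):
    """Express one artwork as a point in the shared space.

    Axes the work does not use receive `default` (an assumption: absence
    of an axis is treated the same as a zero value on it).
    """
    return [work.get(axis, default) for axis in axes]

# Hypothetical encodings of two works along perceptual and conceptual axes.
artwork_a = {"hue": 0.8, "scale": 0.3, "irony": 0.9}
artwork_b = {"hue": 0.1, "duration": 0.7}

axes = shared_space([artwork_a, artwork_b])
print(axes)                    # ['duration', 'hue', 'irony', 'scale']
print(embed(artwork_a, axes))  # [0.0, 0.8, 0.9, 0.3]
print(embed(artwork_b, axes))  # [0.7, 0.1, 0.0, 0.0]
```

Artwork[A] lives in its own 3-dimensional space, yet becomes comparable with Artwork[B] once both are embedded in the shared 4-dimensional one.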

How would an observer form a virtual n-dimensional space within which to interpret an n-dimensional Artwork[A] and determine its value(s)? I have attempted to elaborate this process further in figure 10, as a modified expansion of figure 8 [19]. Excluding didactic information and “pre-fabricated” interpretation templates conferred by education, the observer seems to make educated guesses (drawing on memory, anticipation, and attention) from the work’s salient features. The first proto-evaluation is, in this sense, a question: how (or along what criteria) should one evaluate this Artwork[A]? Once the salient features are gleaned, and the vectors of evaluation are mentally put in place, the observer can examine the mental projection of the artwork and observe difference in relation to other virtual entities, such as the (a) observer’s expectation relative to the (b) author’s stated intentions or explanations. This iterative process of evaluation is where specific notions of value appear. As the evaluation process is entirely virtual, it may occur without the physical artwork’s presence.

Figure 10: Increased detail of relation between advanced computing intelligence and an Artwork in a virtual realm of computation.

In this section, I have attempted to set some basic computational structures in which Artworks can be perceived as artworks, and increasingly, as certain types of artworks which require certain interpretive lenses. The following section will elaborate on the notions of value, and propose some computational structures which produce them.

5. Case-studies in Value calculation

A. General notions of value

I will approach value as a result of a calculation (mathematical notions of value), which may be projected across vectors which are collectively determined to have value (in the most general sense of use-value conferred by consensus) in some type of market (in which determination of mathematical value is contextual to their availability and sparseness, and other market-specific criteria). That is, the calculable notion of monetary value can be extended to non-monetary domains such as cultural value, symbolic value, historical value, etc [20].

Returning to colloquial understanding, an Artwork’s potential values are determined through consideration of interactions between the “facts [21] of the work”: What is the work, How was it made, Who made it, When and Where, and Why. This is, again, why a painting identical to a Rothko does not have the same value as a Rothko painting, or why two works using the same technical method can be evaluated differently based on their historical contexts. The Why, central in contemporary art, may be stated by the artist, experts in the field, or be comprised of “common sense”. The colloquial account is expanded on in figure 11a.

Figure 11a: elaboration of colloquial notions of value-construction based on the “facts of the work”

Figure 11b attempts to locate types of value within or across the “facts of the work”. Annotations at the top of the diagram indicate non-monetary values and annotations below the diagram indicate their monetary value correlates.

Figure 11b: locating value in colloquial notions of Art

Figure 11c expands further, tracing the interactions between “facts of the work” and placing them within a computable structure which flows downwards, arriving at an overall value calculation. There is significant interaction between the colloquial elements: an identity value depends on multiple interactions between Who and When, Where, What, How, and Why. Similarly, the element How appears central to the notion of contemporary artistic skill as it relates to all other elements (how was the work made, how does the context affect it, how is the why validated by the where, etc).

Figure 11c: elaboration of computational framework for value calculation

Subsequently, in Figure 11d, I have replaced How with Artistic Encoding: the way in which information relating to other “facts of the work” is encoded as available information in a work. This seems to fit with the contemporary notion that how a work is made is not simply a technical determination, but is a central element of interpretation (the artist chose this particular how for a reason). Given that the criteria used to evaluate artistic encoding are both subjective and informed by collective criteria, I have added these representations on the right-hand side of the diagram. On the left, I have modelled the dynamics of a simple market, which may be monetary or non-monetary, comprised of interactions between supply, demand, market strength (ability to manipulate value, scale), and elite strength (how much the market elite influence the market). The colour codes reflect different mechanisms and their interactions, and the corresponding values are annotated as they move closer to a final result. We have also arrived at a working formula for artwork market value:

ArtworkMarketValue = ((ArtworkValue x ConceptValue) x ArtistValue) x (ArtistValue x MarketContext)

Figure 11d: locating artwork value in functional computational architecture, including basic subjective-collective criteria formation, and contextual determination by a market
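The working formula can be transcribed directly as a function. This is only a sketch: the formula does not specify units or ranges, so the inputs below are arbitrary positive numbers chosen for illustration, and the function and variable names are mine.

```python
def artwork_market_value(artwork_value, concept_value, artist_value, market_context):
    """Transcribe the working formula as written.

    ArtistValue appears in both factors, exactly as in the formula above.
    """
    work_term = (artwork_value * concept_value) * artist_value
    market_term = artist_value * market_context
    return work_term * market_term

# Same work, same concept, same market; only the (hypothetical) artist value differs.
unknown = artwork_market_value(2.0, 3.0, artist_value=1.0, market_context=1.5)
famous = artwork_market_value(2.0, 3.0, artist_value=4.0, market_context=1.5)

print(unknown)           # 9.0
print(famous)            # 144.0
print(famous / unknown)  # 16.0
```

Because ArtistValue enters the product twice, a fourfold difference in artist value produces a sixteenfold difference in market value, while every other term only scales the result linearly.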

The model above is general, but has so far seemed robust in accounting for the difference between several approaches to artworks and several value types in mental “walk-through” thought-experiments. The model’s emphasis on market determination applying to identity (the person who made the work) rather than the particularities of the Artwork is unpalatable, but seems to correspond to value (monetary or otherwise) determinations in the art market, in which artist identity seems to be the most important (and scalable) determiner of value.

B. In progress case-studies: Specialist value calculations

The following case-studies attempt to model specific evaluation subroutines. These specialized functions derive from learned cultural schema and criteria, and are likely shared across many practitioners. While the computational structures may be similar, the calculations can vary widely due to application of subjective biases. These subjective biases (how important is trait X in the work relative to trait Y) can be recalibrated far more easily than the structure as a whole can be modified. The following figures are heavily in-progress and form a sparse patchwork. Future work will focus on integrating related structures together.

B.1 Technique

Technical skill is a commonly used vector of evaluation, widely accepted as a valid (and at times, principal) element in evaluation of artistic output, both within and (perhaps especially) outside the artistic milieux. In the popular imagination, level of technical skill is a nearly objective element in art (even when notable by its absence), and can be considered as an abstract value across (and relative to) various particular technical domains [22]. Technical skill is often resorted to in response to contemporary artworks when the observer does not connect or understand the artist’s conceptual reasoning (“I have no idea what this art is about, but I appreciate the technical skill or labour involved”).

Figure 12 proposes a computational subroutine for evaluation of technical skill independent of the technical media (and number of technical approaches) evident in a work. While the diagram below may need revision, it combines four types of evaluation relevant to technical skill: Level of skill (given the technique in question, thresholded and modulated by individual knowledge about said technical domain), Labour politics (who produced the work, how long did it take, in what working conditions), Conceptual integration (the use of conceptual techniques to enrich the meaning of technical skill, for instance, the artist re-using an ancestral technique), and Originality (how novel is the combination of skill, labour, and concept). Each of these independent evaluations is informed by individual knowledge, and re-scaled by individual bias (how important is originality vs labour politics, etc). Individual knowledge is informed by collective criteria, but as the results of technical evaluation can be shared as a public opinion, evaluation results may feed back to influence collective criteria.

Figure 12: proposed model for computation of technical skill in an artwork
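One way to read the re-scaling by individual bias is as a weighted average of the four evaluations. The sketch below assumes exactly that; the diagram may combine the terms differently. It also assumes each evaluation is already expressed on a 0 to 1 scale, and all numbers and names are hypothetical.

```python
def technical_skill_score(evaluations, biases):
    """Bias-weighted average of the sub-evaluations (each assumed to lie in 0..1)."""
    total_weight = sum(biases[name] for name in evaluations)
    if total_weight == 0:
        raise ValueError("at least one bias must be non-zero")
    weighted = sum(evaluations[name] * biases[name] for name in evaluations)
    return weighted / total_weight

# One work, evaluated once along the four vectors named in the text.
evaluations = {
    "skill_level": 0.9,
    "labour_politics": 0.5,
    "conceptual_integration": 0.4,
    "originality": 0.2,
}

# Two observers with different subjective biases.
craft_biases = {"skill_level": 3, "labour_politics": 1,
                "conceptual_integration": 1, "originality": 1}
concept_biases = {"skill_level": 1, "labour_politics": 1,
                  "conceptual_integration": 3, "originality": 3}

print(round(technical_skill_score(evaluations, craft_biases), 3))    # 0.633
print(round(technical_skill_score(evaluations, concept_biases), 3))  # 0.4
```

The structure is identical for both observers; only the biases change, which is the sense in which recalibrating biases is cheaper than modifying the structure.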

B.2 Originality

The notion of originality is invoked as an element of evaluation in Figure 12, but may be itself unpacked computationally. For my purposes, I will not consider originality in terms of ownership (an Artwork being “of origin”, as in an “original Picasso”), but rather the colloquial notion of originality as being different from what has preceded it (and to a lesser degree, what has followed it). In this sense, originality is clearly a comparison (or ratio), but a comparison to what exactly, and in what scope?

I propose two theoretically calculable notions of originality in Figure 13: intra-oeuvre originality (how different is an artwork relative to the artist’s oeuvre) and extra-oeuvre originality (how different is an artwork relative to all artworks ever made). On the left side of the diagram, intra-oeuvre originality calculates (1) the average mathematical distance between all features of the evaluated Artwork[n] and all features of every work made by the artist, as well as (2) the distance between all features of the evaluated Artwork[n] and a non-existing “average or typical work” by the artist (the average feature-space of all works created by the artist). These two values are themselves averaged to create an intra-oeuvre originality score (Var indicates that this may be stored as a variable). On the right side, a similar calculation occurs (average of distance comparison to other works, and distance comparison to an “average” of works) in relation to all artworks ever made. Intra and extra-oeuvre originality scores are further averaged to a total originality score, with subjective biases to determine the relative weight (or importance) of intra and extra-oeuvre originality in the final calculation.

Figure 12: Proposed computational model for calculation of originality
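The two originality scores can be sketched in a few lines, under several assumptions: every work has already been reduced to a point with the same number of coordinates, the evaluated work is excluded from the corpus it is compared against, and the subjective bias is a single weight between 0 and 1. The coordinates are toy values chosen so the arithmetic is easy to follow.

```python
import math

def mean_point(works):
    """The non-existing 'average work': the coordinate-wise mean of a corpus."""
    return [sum(column) / len(works) for column in zip(*works)]

def corpus_originality(work, corpus):
    """Average of (1) the mean distance to every work in the corpus and
    (2) the distance to the corpus's 'average work'."""
    mean_distance = sum(math.dist(work, other) for other in corpus) / len(corpus)
    distance_to_typical = math.dist(work, mean_point(corpus))
    return (mean_distance + distance_to_typical) / 2

def total_originality(work, oeuvre, all_works, intra_bias=0.5):
    """Bias-weighted combination of intra- and extra-oeuvre originality."""
    intra = corpus_originality(work, oeuvre)
    extra = corpus_originality(work, all_works)
    return intra_bias * intra + (1 - intra_bias) * extra

work = [0.0, 0.0]
oeuvre = [[3.0, 4.0], [6.0, 8.0]]                # other works by the same artist
all_works = oeuvre + [[3.0, 0.0], [0.0, 4.0]]    # toy stand-in for "all artworks"

print(round(corpus_originality(work, oeuvre), 3))            # 7.5
print(round(corpus_originality(work, all_works), 3))         # 5.25
print(round(total_originality(work, oeuvre, all_works), 3))  # 6.375
```

Raising `intra_bias` towards 1 rewards departure from the artist's own habits; lowering it rewards departure from everything already made.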

The Euclidean [23] distance formula expressed in the bottom left corner allows the calculation of distance between two points in an n-dimensional space. Given the previous discussion in Section 4 of Artworks as existing in a theoretical n-dimensional space, we can imagine an n-dimensional feature space which can account for all features (material, conceptual, contextual etc) of all artworks ever made, in which every Artwork would be represented as a point located in said space, and pairs of artworks could be compared based on their distance. For this theoretical possibility [24], I will invoke an idealized computational model which lacks perceptual, cognitive, or memory constraints, illustrated by Figure 13. Through this model, it could be theoretically possible to detect an infinite number of perceptual and conceptual vectors in a given Work[n], and mathematically encode them via a hashing function [25] in an n-dimensional space [26]. After every feature of a work is summed into an updateable (given new experience or insight) temporary storage WorkHash[n], the work enters permanent storage in two databases: one dedicated to all works that are self-authored and the other dedicated to all works ever made. The architecture below, even in a limited practical form, would allow the originality calculations proposed above to be applied computationally.

Figure 13: Theoretical computational architecture for registration and storage of all possible features of the work for purposes of originality comparison.
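A limited practical form of this architecture might look like the sketch below. It swaps the idealized model for the standard "hashing trick": each named feature is hashed to one of a small, fixed number of axes and its intensity is summed there. This is a deliberate simplification, since a finite number of axes means unrelated features can collide. The class, the function names, the feature labels, and the choice of eight axes are all hypothetical.

```python
import hashlib

N_AXES = 8  # assumption: a small fixed space; the idealized model has no such limit

def feature_axis(name, n_axes=N_AXES):
    """Map a feature name to a stable axis index using SHA-256."""
    digest = hashlib.sha256(name.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % n_axes

def work_hash(features, n_axes=N_AXES):
    """Sum named feature intensities into a fixed-length vector (a toy WorkHash)."""
    vector = [0.0] * n_axes
    for name, intensity in features.items():
        vector[feature_axis(name, n_axes)] += intensity
    return vector

class ArtMemory:
    """Two stores: works that are self-authored, and all works encountered."""

    def __init__(self):
        self.self_authored = {}
        self.all_works = {}

    def register(self, title, features, by_self=False):
        vector = work_hash(features)
        self.all_works[title] = vector  # registering again updates the entry
        if by_self:
            self.self_authored[title] = vector
        return vector

memory = ArtMemory()
own = memory.register("Study (hypothetical)", {"oil paint": 1.0, "monochrome": 0.5}, by_self=True)
other = memory.register("Another artist's work", {"bronze": 1.0})
print(len(memory.self_authored), len(memory.all_works))  # 1 2
```

The stored vectors can be compared with any distance measure, so the two databases correspond to the intra-oeuvre and extra-oeuvre corpora used in the originality calculation.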

B.3 Artist Reputation

Computational approaches may be particularly useful to account for values which are external to the Artwork itself, but figure prominently in the Artwork’s evaluation. For example, an artist’s reputation often heavily influences the monetary and cultural value computations applied to their Artworks. Given the wealth of documented professional activities undertaken by a contemporary artist, the computation of reputation is simple compared to the above discussion of originality, and can be summarized as an aggregate of all professional activities (exhibitions, reviews, acquisitions, etc), each given a score relative to their context, perceived importance, and other reputational factors. Additionally, the artist’s social standing in a milieu (who do they know or associate with), and their perceived fame/infamy contextualize the aggregation of professional activities. I propose that perception of fame/infamy (on a scale of 1 being most famous, and -1 being most infamous) can be normalized as an absolute value: infamy is nearly as valuable as fame, and certainly much more valuable than anonymity (score of 0). The subjective biases introduced may be modified through reflection, but I suggest several “default values” that seem to correspond to the current artworld’s priorities. While some arbitrary value assignment (i.e. how important is one museum relative to another) is required to operationalize this model, an application of this computational structure is plausible.

Figure 14: computational model to calculate an Artist’s reputation
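A minimal sketch of this aggregation follows. It assumes that social standing and fame act as multipliers on the summed activity scores, which is only one possible reading of "contextualize"; the scores, the 0 to 1 scale for social standing, and the bias values are hypothetical and are not the default values suggested in the figure.

```python
def reputation(activity_scores, social_standing, fame, social_bias=1.0, fame_bias=1.0):
    """Aggregate scored professional activities, then scale by context.

    activity_scores: one score per exhibition, review, acquisition, etc.
    social_standing: assumed to lie in 0..1.
    fame: -1 (most infamous) .. 1 (most famous); only its absolute value counts.
    """
    aggregate = sum(activity_scores)
    context = 1.0 + social_bias * social_standing + fame_bias * abs(fame)
    return aggregate * context

activities = [3.0, 1.5, 0.5]  # hypothetical scores for three activities

famous = reputation(activities, social_standing=0.5, fame=0.5)
infamous = reputation(activities, social_standing=0.5, fame=-0.5)
anonymous = reputation(activities, social_standing=0.5, fame=0.0)

print(famous, infamous, anonymous)  # 10.0 10.0 7.5
```

A strict absolute value makes infamy exactly as valuable as fame. To capture "nearly as valuable", negative fame could be given a slightly smaller bias than positive fame.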

B.4 Representation and Marginalization

Finally, the computational perspective can be applied to the realm of public funding and non-profit art policy. Unlike many of the previous models proposed, official decision-making processes in art policy and funding bodies in liberal democracies are literal (although often impermeable) computational systems of value construction [27] with potentially measurable impact in the world. While each artist is politely lauded as a universe unto themselves, from the functional point of view of public policy, we are data-points [28]. A re-examination of such practices from a computational perspective is thus especially necessary. The advantages of computational audits include forcing policy-makers to explain criteria and their intended impact in detailed functional terms, and later to evaluate the intended impact against measurable results. When criteria are revealed as non-enumerable, a computational audit may force a methodological re-thinking of the nature of the criteria, or force policy-makers to articulate the non-calculable elements of the criteria explicitly for all to see.

Figure 15 speculates on a computational structure to calculate representation and marginalization given current trends in the artworld. In this specific case, such an approach would force policy makers to adopt clear terms with mathematical correlates, breaking down compound terms such as marginalization into (a) demographic under-representation in the arts [29] and (b) non-art related historical grievances [30]. An explicit computational framework would also require policy makers to clearly identify the time-period in which marginalization or under-representation is identified, establish mathematical definitions for under-representation [31], and provide a mathematical pathway from marginalization or under-representation to non-marginalization or adequate representation [32].

While this proposal is speculative in nature and requires further contemplation, input from a variety of demographic interests, testing, and revision, it does follow some central elements of the structural logic of current progressive policies applied in art and culture in liberal democracies [33]: however, as current implementations of this computational structure are not made explicit, its results are inconsistent [34].

Figure 16 extends the calculation of representation and marginalization and offers a mathematical pathway from under-representation to adequate-representation. Again, the explicit nature of calculation interval and numerical corrective bias would force policy-makers to make specific projections for policy objectives in specific time frames, for which they could be held accountable.

Figure 15: proposed computational process to calculate an artist’s marginalization score

Figure 16: proposed mathematical pathway towards adequate representation following the logic of a liberal democracy
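To show what an explicit calculation interval and numerical corrective bias could look like, here is a deliberately simple sketch. It assumes under-representation is measured as the difference between a group's share of the general population and its share among funded artists, and that each interval closes a fixed fraction of the remaining gap. Both assumptions, and all of the numbers, are illustrative and are not taken from Figures 15 and 16.

```python
def representation_gap(share_in_arts, share_in_population):
    """Positive when a group is under-represented in the arts (percentage points)."""
    return share_in_population - share_in_arts

def pathway(share_in_arts, share_in_population, corrective_bias, intervals):
    """Project the share in the arts over successive calculation intervals.

    corrective_bias: fraction (0..1) of the remaining gap closed per interval.
    """
    shares = [share_in_arts]
    for _ in range(intervals):
        gap = representation_gap(shares[-1], share_in_population)
        shares.append(shares[-1] + corrective_bias * gap)
    return shares

# Hypothetical group: 10% of funded artists, 20% of the reference population.
projection = pathway(10.0, 20.0, corrective_bias=0.5, intervals=3)
print(projection)  # [10.0, 15.0, 17.5, 18.75]
```

Under this rule the gap halves at every interval but never reaches zero, so an explicit policy would also have to state the tolerance at which representation counts as adequate.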

A final thought on this thorny problem: perhaps the disconnect between the promises of public funding structures and their implementation comes as a result of minimal resonance between a computational system (funding criteria) and the non-enumerable nature of the content which it is tasked with evaluating. While I suggest the computability of art as a way to address this disconnect (in essence, public funding criteria and artistic practice would share a language, namely computation), the inverse could also apply: public funding bodies could admit that they cannot follow objective criteria guidelines, and invoke new models for support of artistic practice (such as basic universal income). The current situation, in which computation occurs but is not explicit, seems to be the worst of both worlds.

6. Art as social software and implications for power, creativity, and personal perspectives

One potential ramification of adopting (or augmenting our current approaches to include) a computational approach to Art relates to the power dynamics inherent in the Artworld. If, as I have claimed, and following from my final observations in section 5,

the artworld is a collection of concurrent computational processes, iteratively performed by individual computing agencies (artists, observers, writers, etc),

and if the collectivity of the Artworld is in itself a massively distributed computable process which informs individual computations,

And if the purpose of all such computations is to produce evaluations, that is to calculate value,

And if the computational agencies (both individual and collective) in question generally reject self-characterization as computational processes,

the question arises: who benefits from such a situation and how?

Arguably, a computational value calculation that does not explicate itself as such would allow greater and more direct manipulation of its value calculations, and this access to value manipulation will concentrate in the hands of agencies with greater power. A brief look at the global Art-market would suggest that this proposed interpretation is not unreasonable [35]. A making-explicit of computational processes can possibly mitigate existing power dynamics, or at the very least, make power dynamics themselves explicit for all to see. Sunlight, after all, is said to be the best disinfectant.

The proposals made in section 4 relating to the information content of art, and the possibilities of encoding given a limited information space, also carry several implications for artists. While the notion of art as an algorithmic manipulation of parameters may seem to indicate a theoretically fixed number of possibilities for art making, the vastness of the space we do have should not be taken for granted. However, the notion of manipulating the same parameters with slight variations, resulting in a spatial cluster called “style” or “artistic voice”, should be seriously considered in light of its resonance with market economics and personal branding. This may require a difficult discussion of what exactly is meant by originality (as suggested in section 5B) or perhaps a redefinition of creativity as a whole. Even a theoretically calculable measure of originality may force the admission that what we currently value in Artworks in terms of originality is more akin to parametric deviation from venerated models of value-encoding than paradigm-shifting innovation [36]. Conversely, if we would like to encourage diversity of artworks, we may choose to assign originality-as-difference higher subjective biases than we currently do.

Finally, if Art is a set of simultaneous computable processes occurring individually, inter-subjectively, and collectively, then it is a social software. As software, it can be investigated, debugged, re-calibrated (incremental change), or re-written (structural change). This perspective has implications for all involved in art, in the political sense described above, but also in relation to the personal politics of the art observer as they navigate between subjective and collective evaluation criteria for different forms of Art.

As discussed earlier, art genres become entrenched through repetition and pedagogy, and as individual evaluation schemas for specific art genres aggregate, they can be conceived as “code libraries” of appropriate calculations for a given input class: as a knowledgeable art observer, I interpret an abstract painting with a different mental schema than a political community art project [37]. Throughout the process of genrefication, code libraries combine to create “software suites” appropriate for certain art genres. These software suites can be swapped and re-loaded based on what we perceive is important (as suggested previously, these clues are gleaned from salient features, priming, expectation, and additional didactic information).

We can imagine the specialized software suites being loaded, swapped, and run on an operating system called Art-as-OS, which normalizes their individual calculations through a general notion of value (this may just be a determination that “I like” something, or that it is “of value”), allowing comparison of results between the outputs of different software suites (“I like this painting, but I like that sculpture more”). Art-as-OS determines the zero-dimensional notion of art (“is it or isn’t it art”) and perhaps even structures this determination: a readymade in a non-artistic context is just an object, but when viewed through the operating system which is Art, it is most certainly an Artwork. Figure 17 attempts to model an observer’s engagement with art through this metaphor. Returning to Joscha Bach’s notions with which I began my inquiry, Art-as-OS allows us to begin moving away from the notion of art being “good” or “bad”, something that I like or dislike, and towards the notion of an Artwork being a suitable (or meaningful) encoding in a particular computational context.

Figure 17: Art-as-OS (Operating System), medium and genre-specific conventions as swappable software suites of parametric analysis.
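The metaphor can be sketched as a small dispatcher. Everything here is hypothetical: the genre names, the parameters each suite looks at, and the choice to have every suite return a score between 0 and 1 so that results from different suites are comparable.

```python
def painting_suite(work):
    """Toy evaluation schema for abstract painting."""
    return (work["composition"] + work["colour"]) / 2

def community_project_suite(work):
    """Toy evaluation schema for political community art projects."""
    return (work["participation"] + work["documentation"]) / 2

class ArtAsOS:
    """Loads genre-specific suites and normalizes their output as a general value."""

    def __init__(self):
        self.suites = {}

    def install(self, genre, suite):
        self.suites[genre] = suite

    def evaluate(self, thing, context):
        # Zero-dimensional determination: outside an art context it is just an object.
        if context != "art":
            return None
        suite = self.suites[thing["genre"]]   # swap in the suite for this genre
        return suite(thing)                   # all suites share a 0..1 value scale

observer = ArtAsOS()
observer.install("abstract painting", painting_suite)
observer.install("community project", community_project_suite)

painting = {"genre": "abstract painting", "composition": 0.5, "colour": 1.0}
project = {"genre": "community project", "participation": 1.0, "documentation": 0.0}

print(observer.evaluate(painting, context="art"))     # 0.75
print(observer.evaluate(project, context="art"))      # 0.5
print(observer.evaluate(painting, context="street"))  # None
```

Because both suites report on the same scale, the observer can say "I like this painting more than that project" even though the two were evaluated on entirely different parameters.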

On a personal, and distinctly human note, I have attempted to use the notion of Art-as-OS while engaging with art students whose media, stated goals, aesthetics, and intentions are quite different than my own. To help them achieve their goals - that is, to help them become a “better” artist in whatever way is meaningful for them - I am required to load specific software suites which relate to their intentions, goals, and chosen media and try to interpret their work, methods, and techniques through this lens. I have found this approach a useful pedagogical aid which allows me to give feedback informed by my opinions and taste, but not enslaved to them. I do not feel that it has made the process of art making, observing, or teaching any less magical; perhaps the notion of computability only enhances the magical un-explainability of what Art is and can do.

Footnotes

[1] Computation in this context is defined as a consistent operationalization of mathematical principles.

[2] I will capitalize the word Art when I refer to it, or when it self-declares itself, according to the humanist tradition: it is

[3] I am referring here to style transfer techniques in machine learning, as well as the effects of pixel perturbation of trained images to cloak style elements (as seen in Glaze or Nightshade, see Shan et al 2023).

[4] This of course applies in areas outside of art, including all types of education, any type of self-improvement or psychological care, and medical care. Taking an aspirin for a headache, learning how to swim, and going to a therapist all assume a computational ground in which the body or mind can respond and adapt to specific input due to internal structuring.

[5] Arguably, a court composer in 16th century Europe may be happy to accept that their compositions are algorithmic manipulations within a limited space of functional tonality, with the measure of the technical prowess (the degree of elaboration of algorithmic structure) correlating to their ability to channel the divine, or God.

[6] I am not claiming that the inhumanist perspective is specifically suited to study art, but that inhumanist perspective will help normalize an artist’s understandable bias and self-characterization as an agent of some unexplainable sublime. The artist may be in contact with the sublime, but as the sublime-as-explanatory is attached to notions of monetary value, such statements are highly suspect, at best poor or lazy heuristic and at worst the very definition of product fraud by a self-imposed priesthood.

[7] This is culturally specific, and bias may vary between cultures. For instance, the perceived challenge to subjectivity and the self offered by a computational account may be less relevant to practitioners of Zen Buddhism than it would be for a contemporary artist in the Western tradition.

[8] Flowchart diagrams can be seen as encompassing a functional language which seems to allow readers to follow multiple intertwined or concurrent information flows with higher efficiency than natural language. The use of a diagrammatic approach to “reduce intelligibility gap” of hidden computational processes is also explored in Lim et al. 2023.

[9] Multiplication and division are, for instance, treated conceptually as “product of” and “quotient of”.

[10] Artists, curators, theorists, gallerists, collectors, administrators, as well as the general public.

[11] All collective concepts are inherently intersubjective, as they must be verified by other members of a community.

[12] Much of which, from cell behaviour to simple perception and unconscious muscle movement, is widely accepted as computation in biology and neuroscience.

[13] Any real agent in a physical world will of course have to include action mechanisms as part of a perceptual-motor system. For the sake of simplicity, and since our virtual agent can be considered as existing in a non-physical world, I have limited their possible reactions to focal attention and cognitive structuring as methods to process input information.

[14] This is referred to in psychology as the construction of a social self.

[15] Bach extends this virtual nature to all conscious phenomena: as a story told about the representation of a physical agent in a representation of a physical world, it is not possible for conscious experience to be anything other than virtual relations in a virtual space (presumably, correlated to a physical reality).

[16] While the example relies on perceptual understanding of 3D physical spatial dimensionality as a correlate of how much information can be encoded, the notion of an axis as a one-dimensional space does not need to rely on correlates in our physical 3-dimensional experience. For instance, a virtual agency (or inversely, a potential theoretical artwork) can possess a single axis of perceptual information registering light information on a spectrum. This single one-dimensional axis (black axes) can lead to higher level constructs (green axes) such as “shade”, “intensity”, “lightness”, “darkness” etc.

[17] A 1-dimensional space does not have fewer possibilities than 2 dimensional space. As the axis is infinite, there are infinite variations possible. A 2nd axis opens a new infinite set of possibilities, which now interact combinatorially with the infinite possibilities of the first axis. It is not the number of variations that increases, it is the type of possible variation that expands.

[18] I am borrowing from Giulio Tononi’s notion of qualia as a multi-dimensional shape in multidimensional qualia space.

[19] Compared to the poetic nature of prismatic encoding and decoding in figure 8, figure 10 is more granular and with more explanatory power, yet somehow seems to lose some of its inherent truth value with regard to the direct and non-refractive nature of decoding.

[20] These of course often find a correlation in monetary assessment, but can exist independently: an unsold (whether by intent or accident) work of performance art can still be agreed to have significant non-monetary values in certain communities or markets. Similarly, an academic publication may not have much monetary value but is determined to have value in the marketplace of ideas (if, for instance, the contribution is deemed of value and original).

[21] I do not mean to suggest that value is simply constructed through facts. In the context of Art, feeling is often given a large role in interpretation. However, as discussed previously, without the discovery of a magical lossless communication channel for feelings, or an emotional substrata as a new form of matter, we are left with the sole possibility for feelings to arise as a result of the facts of the work. The relationship between feeling and facts may be clear (“I like this work because it was made by my child”, relating to Who and When) or convoluted (perhaps, in more convoluted relations, facts are still causal of emotions, and emotion can be considered an evolutionary-useful heuristic response to complex problems that do not have clear solution).

[22] A painter and a sculptor can both be said to have a comparably high level of technical skill, although the skills in question are quite different from one another.

[23] Euclidean distance is invoked as it is a simple formula for calculating distance in an n-dimensional space. However, as it articulates the shortest straight line between points, it may not be the most appropriate mathematical distance measurement: the space of art (like the texture of spacetime) might be non-uniform, which would need to be reflected in a different choice of distance measurement.

[24] The representation of artworks in an n-dimensional space with proximity correlating to similarity already occurs with current machine learning techniques. The theoretical aspect of my proposition is that such a spatial representation would be possible with all possible perceptible and conceptual features of a given work.

[25] A hashing function maps data of arbitrary size to a fixed or variable length output string of alphanumeric characters.

[26] This approach, as well as the use of hashing functions, is heavily indebted to the analysis of images undertaken in unsupervised machine learning techniques.

[27] The purposes of which range from economic stimulation of artistic sectors to national and international narrative construction and, at times, corrective demographic measures or unofficial reparation efforts.

[28] This reduction is intended to alleviate the reliance on subjective bias and move towards empirically measurable real-world outcomes.

[29] Which are statistically measurable.

[30] The ranking of grievances does not need to be imposed from above, and could even be crowd-sourced or voted on by members of the community. In other words the explicit computability of the process may make it more transparent and democratic.

[31] For instance, a comparison to several overlapping levels of community demographics (of artistic practitioners and general population demographics in a given city, country, or global scales).

[32] This, after all, is the stated goal of corrective efforts.

[33] Including the conception of identity as a discrete calculable entity.

[34] This arguably leads to a loss in confidence in such systems by all practitioners.

[35] Similar to my approach to the term value, power in this sense is not limited to monetary power, although that certainly helps.

[36] This notion was explored artistically in my installation All We’d Ever Need Is One Another, in which an autonomous art factory creates images using two scanners facing one another using randomized colour profiles and scan settings. Each randomly created image is subsequently compared to a database of artworks in commercial and institutional collections, and if it finds a “match” above a certain similarity threshold, the random image is validated-as-art and uploaded to a dedicated online gallery.
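The validation step described in this note can be sketched roughly as follows. This is not the installation's actual code: the use of cosine similarity, the threshold value, the feature vectors, and the names `cosine_similarity` and `validate_as_art` are all assumptions made for illustration.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two feature vectors, from -1 (opposite) to 1 (same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an all-zero vector is treated as matching nothing
    return dot / (norm_a * norm_b)

def validate_as_art(candidate, collection, threshold=0.95):
    """Return the title of the closest collected work at or above the threshold, else None."""
    best_title, best_score = None, threshold
    for title, features in collection.items():
        score = cosine_similarity(candidate, features)
        if score >= best_score:
            best_title, best_score = title, score
    return best_title

# Hypothetical database of collected works, each reduced to two features.
collection = {"work_x": [1.0, 0.0], "work_y": [0.0, 1.0]}

print(validate_as_art([0.99, 0.05], collection))  # close to work_x
print(validate_as_art([1.0, 1.0], collection))    # equally far from both
```

Everything about what counts as art in this sketch is carried by the contents of `collection` and the value of `threshold`, which is the point the installation makes.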

[37] Even within the medium of painting, an observer would evaluate a pointillist painting with different criteria (or subroutines) than a cubist painting.

References

Bach, Joscha. “Joscha: From Computation to Consciousness (31c3).” YouTube, 24 Sept. 2015, www.youtube.com/watch?v=lKQ0yaEJjok. Accessed 4 Feb. 2024.

Negarestani, Reza. “The Labor of the Inhuman, Part I: Human.” E-Flux Journal, vol. 52, Oct. 2014, www.e-flux.com/journal/52/59920/the-labor-of-the-inhuman-part-i-human/. Accessed 5 Jan. 2023.

Negarestani, Reza. “The Labor of the Inhuman, Part II: The Inhuman.” E-Flux Journal, vol. 53, Feb. 2014, www.e-flux.com/journal/52/59920/the-labor-of-the-inhuman-part-i-human/. Accessed 5 Jan. 2023.

Negarestani, Reza. “Imagination Inside-Out: Notes on Text-to-Image Models via Husserl & Vaihinger.” Model Is the Message, ed. Mohammad Salemy. &&&/The New Centre for Research & Practice, Berlin, 2023, pp. 21–38.

Lim, Brian, et al. “Diagrammatization: Rationalizing with Diagrammatic AI Explanations for Abductive-Deductive Reasoning on Hypotheses.” arXiv, 2023, doi:10.48550/arXiv.2302.01241. Accessed 20 Mar. 2023.

Pelowski, Matthew, and Fuminori Akiba. “A Model of Art Perception, Evaluation and Emotion in Transformative Aesthetic Experience.” New Ideas in Psychology, vol. 29, 2011, p. 87.

Shan, Shawn, et al. “Glaze: Protecting Artists from Style Mimicry by Text-to-Image Models.” 32nd USENIX Security Symposium (USENIX Security 23), 2023.
